In-memory computing revolutionizes traditional data processing methods by storing and processing data directly in the main memory (RAM) of a computer system, rather than relying on disk-based storage.

Samsung, a key player in memory technology, has shared projections that DDR6 memory could reach data rates of up to 12,800 MT/s, double the 6,400 MT/s top speed of the original DDR5 specification.
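To put a transfer rate like 12,800 MT/s in perspective, it can be converted to a theoretical peak bandwidth. The sketch below is only an illustration: the function name `peak_bandwidth_gb_s` is made up for this example, and the 64-bit bus width is an assumption chosen for easy comparison, not a published DDR6 parameter.

```python
def peak_bandwidth_gb_s(data_rate_mt_s: float, bus_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes).

    data_rate_mt_s: transfers per second, in millions (MT/s).
    bus_width_bits: bits moved per transfer. 64 is an assumed value for
    illustration only; real channel layouts differ between generations.
    """
    bytes_per_transfer = bus_width_bits / 8
    megabytes_per_second = data_rate_mt_s * bytes_per_transfer
    return megabytes_per_second / 1000


if __name__ == "__main__":
    for rate in (6400, 12800):
        print(f"{rate} MT/s -> {peak_bandwidth_gb_s(rate):.1f} GB/s")
```

Under that assumption, doubling the transfer rate doubles the peak figure. Sustained bandwidth in a real system is lower, since this calculation ignores refresh, command overhead, and access patterns.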

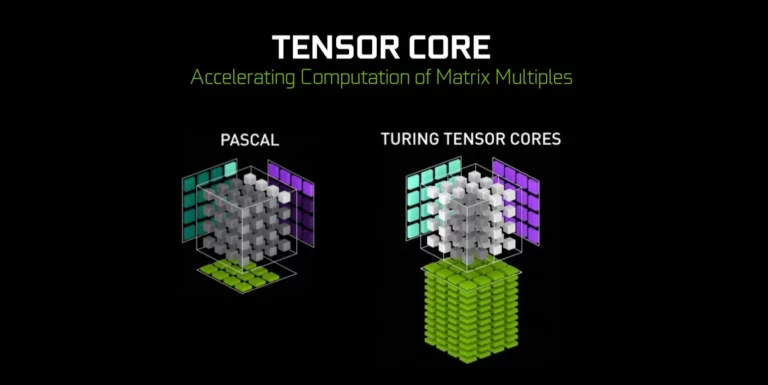

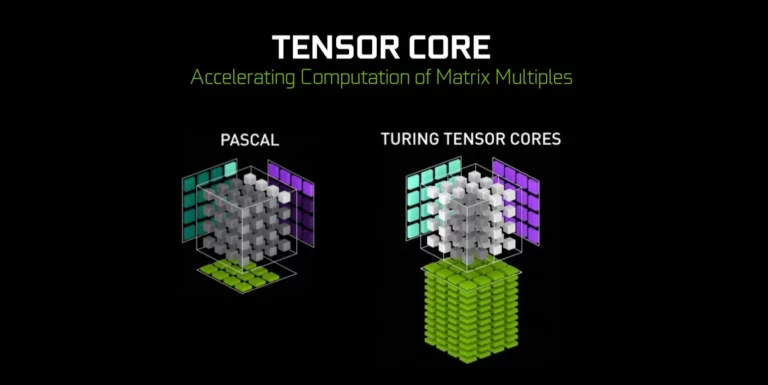

AI models often involve large-scale matrix operations, such as multiplying input data by weight matrices and adding biases. This process forms the basis of forward and backward propagation algorithms used in training neural networks.
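As a rough illustration of the operation described above, here is a minimal sketch of a single fully connected layer, computing `y = W x + b` on the forward pass and the matching gradients on the backward pass. The function names `dense_forward` and `dense_backward` are hypothetical, and plain Python lists are used so the example runs without any libraries; real frameworks run the same arithmetic on optimized matrix kernels.

```python
def dense_forward(x, weights, bias):
    """Forward pass: y[i] = sum_j weights[i][j] * x[j] + bias[i]."""
    return [
        sum(w_ij * x_j for w_ij, x_j in zip(row, x)) + b_i
        for row, b_i in zip(weights, bias)
    ]


def dense_backward(x, weights, grad_y):
    """Backward pass for y = W x + b, given grad_y = dLoss/dy.

    Returns (grad_weights, grad_bias, grad_x).
    """
    # dLoss/dW[i][j] = grad_y[i] * x[j]  (outer product)
    grad_weights = [[g_i * x_j for x_j in x] for g_i in grad_y]
    # dLoss/db[i] = grad_y[i]
    grad_bias = list(grad_y)
    # dLoss/dx[j] = sum_i W[i][j] * grad_y[i]  (transpose of W times grad_y)
    grad_x = [
        sum(weights[i][j] * grad_y[i] for i in range(len(weights)))
        for j in range(len(x))
    ]
    return grad_weights, grad_bias, grad_x


if __name__ == "__main__":
    x = [1.0, 2.0]
    W = [[1.0, 0.0], [0.5, -1.0]]
    b = [0.5, 1.0]
    print(dense_forward(x, W, b))
    print(dense_backward(x, W, [1.0, 2.0]))
```

Both passes reduce to multiply-accumulate operations over the weight matrix, which is why memory bandwidth and matrix throughput tend to dominate AI hardware design.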

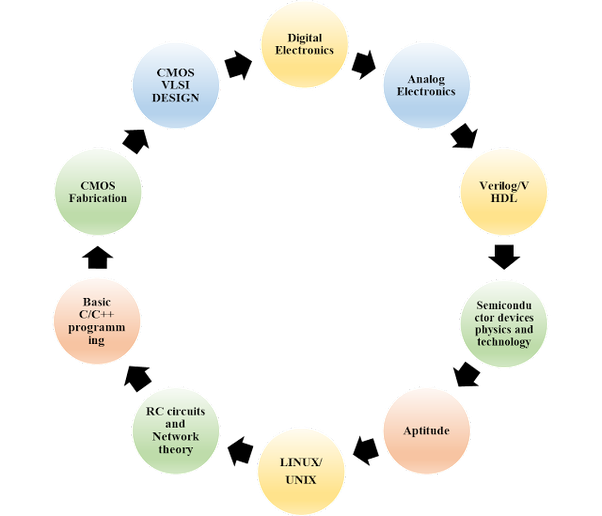

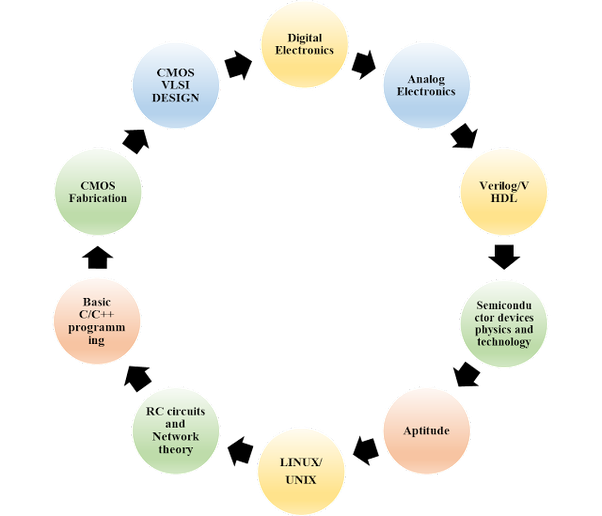

Breaking into this field requires more than just technical know-how—it demands a strategic approach and a commitment to continuous learning.

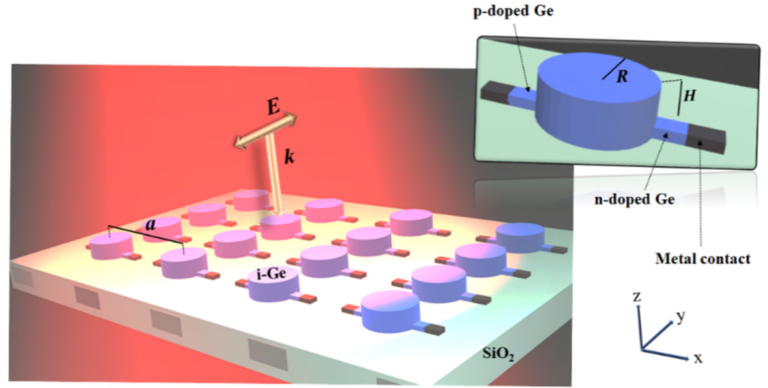

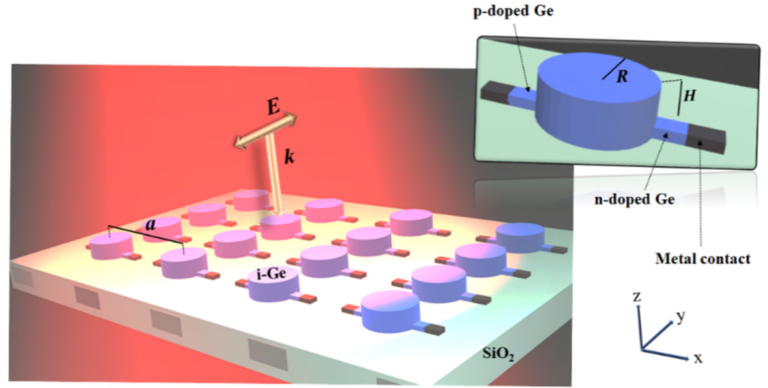

Typically less than 100 nanometers in diameter, they are small enough to exploit unique quantum effects that enhance light-matter interaction, a property that is highly useful in building photonic integrated circuits.

In the ever-evolving landscape of artificial intelligence, the shift towards edge computing has become a defining trend, and at the forefront of this revolution stands NVIDIA Jetson.

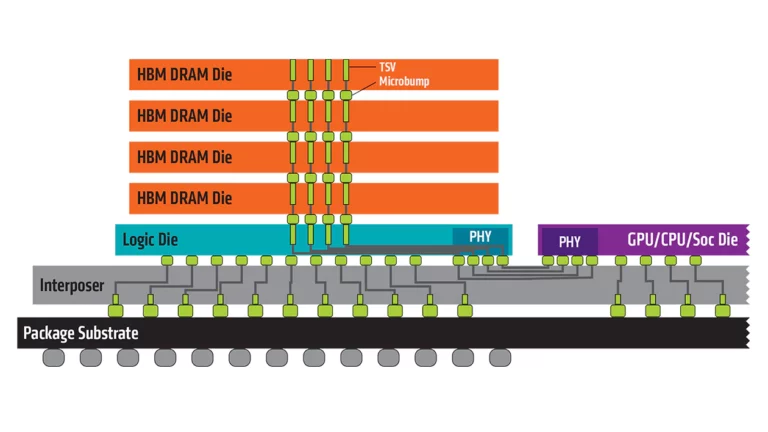

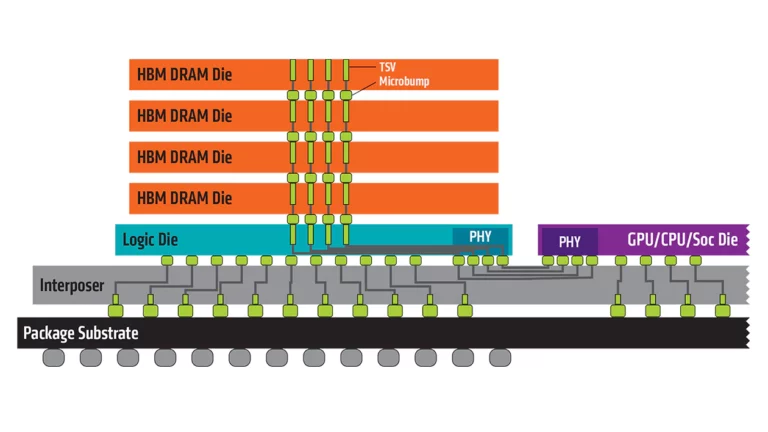

High Bandwidth Memory (HBM) stands as a technological marvel, enhancing the capabilities of high-performance computing.

Delve into the intricate connection between SRAM and artificial intelligence as we unveil seven transformative ways SRAM acts as the backbone, empowering AI with swift thinking, real-time decision-making, and unmatched efficiency.

Elevate your AI hardware career with a toolkit spanning algorithms, microcontrollers, electrical engineering, and physics.

Behind the scenes, a highly intricate and choreographed dance unfolds within the confines of a semiconductor fabrication facility, commonly known as a fab.